Kubernetes Dynamic Scaling and Failover


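Scaling up and down

Method 1

kubectl scale deployment nginx-deploy --replicas=8

// Scale from the command line (scaling down works the same way, with a smaller --replicas value)

kubectl get pod -o wide | grep nginx | wc -l

8

kubectl get deployment nginx-deploy -o yaml

kubectl get deployment nginx-deploy -o json

// The Deployment can also be printed as YAML or JSON; the replica count appears under spec.replicas

Method 2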

[root@master v3]# kubectl edit deployments nginx-deploy

// Edit the Deployment named nginx-deploy

spec: // find the spec field

  progressDeadlineSeconds: 600

  replicas: 6 // change the replica count

// The change takes effect as soon as you save and quit

[root@master v3]# kubectl get pod -o wide | grep nginx | wc -l

6

// Check the replica count

Autoscaling

Deploy metrics-server

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-view: "true"
  name: system:aggregated-metrics-reader
rules:
- apiGroups:
  - metrics.k8s.io
  resources:
  - pods
  - nodes
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  - nodes/stats
  - namespaces
  - configmaps
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server-auth-reader
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  labels:
    k8s-app: metrics-server
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  ports:
  - name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    k8s-app: metrics-server
---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: metrics-server
  name: metrics-server
  namespace: kube-system
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  strategy:
    rollingUpdate:
      maxUnavailable: 0
  template:
    metadata:
      labels:
        k8s-app: metrics-server
    spec:
      containers:
      - args:
        - --cert-dir=/tmp
        - --secure-port=443
        - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
        - --kubelet-use-node-status-port
        - --metric-resolution=15s
        - --kubelet-insecure-tls
        image: registry.aliyuncs.com/k8sxio/metrics-server:v0.5.0
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 3
          httpGet:
            path: /livez
            port: https
            scheme: HTTPS
          periodSeconds: 10
        name: metrics-server
        ports:
        - containerPort: 443
          name: https
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /readyz
            port: https
            scheme: HTTPS
          initialDelaySeconds: 20
          periodSeconds: 10
        resources:
          requests:
            cpu: 100m
            memory: 200Mi
        securityContext:
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
        volumeMounts:
        - mountPath: /tmp
          name: tmp-dir
      nodeSelector:
        kubernetes.io/os: linux
      priorityClassName: system-cluster-critical
      serviceAccountName: metrics-server
      volumes:
      - emptyDir: {}
        name: tmp-dir
---
apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  labels:
    k8s-app: metrics-server
  name: v1beta1.metrics.k8s.io
spec:
  group: metrics.k8s.io
  groupPriorityMinimum: 100
  insecureSkipTLSVerify: true
  service:
    name: metrics-server
    namespace: kube-system
  version: v1beta1
  versionPriority: 100

Write the hpa-cpu.yaml configuration file

apiVersion: apps/v1

kind: Deployment

metadata:

name: deployment-hpa-cpu

spec:

replicas: 1

selector:

matchLabels:

app: myapp

template:

metadata:

labels:

app: myapp

spec:

containers:

- name: myapp

image: nginx

ports:

- containerPort: 80

resources:

limits:

cpu: 20m

memory: 10Mi

requests:

cpu: 20m

memory: 10Mi

---

apiVersion: v1

kind: Service

metadata:

name: myapp

spec:

type: ClusterIP

selector:

app: myapp

ports:

- name: http

port: 80

targetPort: 80

---

apiVersion: autoscaling/v1

kind: HorizontalPodAutoscaler

metadata:

name: deployment-hpa-cpu

spec:

maxReplicas: 6

minReplicas: 1

scaleTargetRef:

apiVersion: apps/v1

kind: Deployment

name: deployment-hpa-cpu

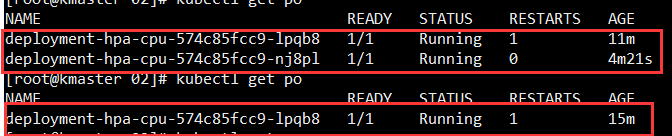

targetCPUUtilizationPercentage: 80部署hpa

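kubectl apply -f hpa-cpu.yaml

Test the HPA

# Open three terminal windows on k8s-master01 and run one of the following in each

watch kubectl get pods

watch kubectl top pods

ip=$(kubectl get svc myapp -o jsonpath='{.spec.clusterIP}')

for i in $(seq 1 100000); do curl "http://$ip/?a=$i"; done

# Alternative load generator: loop wget against the same ClusterIP

while true; do wget -q -O- "$ip"; done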
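
The replica count that `watch kubectl get pods` shows under load can be anticipated with the scaling rule documented for the HorizontalPodAutoscaler: desired = ceil(current × currentUtilization / targetUtilization). The result is left unchanged when the ratio is within a tolerance (0.1 by default) and is clamped to minReplicas and maxReplicas. The sketch below is a simplified model, not the controller's code. The function name and the sample utilization figures are made up for illustration; the 80% target and the 1 to 6 replica range come from the HPA manifest above. It ignores missing metrics, unready Pods, and the scale-down stabilization window.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization=80,
                     min_replicas=1, max_replicas=6, tolerance=0.1):
    """Simplified HPA rule: ceil(current * ratio), clamped to [min, max]."""
    ratio = current_utilization / target_utilization
    # Within the tolerance band the controller leaves the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, wanted))


# Utilization is a percentage of the CPU request (20m in the manifest above).
print(desired_replicas(1, 200))  # 1 Pod at 200% of its request
print(desired_replicas(3, 85))   # close to target, inside the tolerance band
print(desired_replicas(3, 400))  # capped at maxReplicas
print(desired_replicas(6, 10))   # load gone, shrink towards minReplicas
```

Because the CPU request is only 20m, even light traffic pushes utilization far above 80%, which is why the test loop scales the Deployment out quickly.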

Failover

Write the failover manifest

apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-nginx-deploy
spec:
  replicas: 2
  selector:
    matchLabels:
      app: my-nginx
  template:
    metadata:
      labels:
        app: my-nginx
    spec:
      tolerations:
      - key: "node.kubernetes.io/unreachable"
        operator: "Exists"
        effect: "NoExecute"
        tolerationSeconds: 2
      - key: "node.kubernetes.io/not-ready"
        operator: "Exists"
        effect: "NoExecute"
        tolerationSeconds: 2
      containers:
      - name: my-nginx-container
        image: nginx
        ports:
        - containerPort: 80

How Pods are rescheduled after a Kubernetes node fails:

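0. The control plane checks node status at a fixed interval to decide whether a node has lost contact. The interval is set by node-monitor-period (default 5s).

1. If a node sends no heartbeat for node-monitor-grace-period (default 40s), Kubernetes marks it NotReady.

2. A node that has only just started gets a longer allowance, node-startup-grace-period (default 1m0s), before it is marked unhealthy.

3. Once the node is marked unhealthy, Kubernetes starts evicting the Pods on it. Older releases waited pod-eviction-timeout (default 5m0s). Releases that use taint-based eviction instead wait for each Pod's tolerationSeconds on the not-ready and unreachable taints, which the manifest above sets to 2 seconds.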
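
The steps above can be turned into a rough estimate of how long a Pod stays on a dead node. The sketch below is a back-of-the-envelope model, assuming taint-based eviction and the defaults quoted in the list; the function name is hypothetical, and real clusters add scheduling and image-pull time before the replacement Pod is Running.

```python
def eviction_delay_bounds(grace_period_s=40, toleration_s=2, monitor_period_s=5):
    """Approximate (earliest, latest) seconds from last heartbeat to eviction.

    earliest: the node is marked NotReady right when the grace period expires,
              then the Pod's tolerationSeconds run out.
    latest:   the controller only notices on its next check, up to one
              monitor period later.
    """
    earliest = grace_period_s + toleration_s
    latest = earliest + monitor_period_s
    return earliest, latest


# tolerationSeconds: 2, as in the failover manifest above
print(eviction_delay_bounds())
# tolerationSeconds: 300, the value Kubernetes adds when none is specified
print(eviction_delay_bounds(toleration_s=300))
```

With the 2-second tolerations the Pods should be evicted well under a minute after `kubelet` stops, compared with several minutes when the default toleration applies.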

Stop the kubelet on a worker node and check whether its Pods are moved

systemctl stop kubelet

Advanced Deployment configuration

Rolling update strategy
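
The maxSurge and maxUnavailable fields in the manifest that follows bound how many Pods exist and how many stay available while an update is in progress. The sketch below works through that arithmetic, assuming the documented rounding for percentage values (maxSurge rounds up, maxUnavailable rounds down). The helper names are hypothetical and the code is an illustration, not the Deployment controller's implementation.

```python
import math


def resolve(value, replicas, round_up):
    """Turn an absolute number or a percentage string into a Pod count."""
    if isinstance(value, str) and value.endswith("%"):
        exact = replicas * int(value[:-1]) / 100
        return math.ceil(exact) if round_up else math.floor(exact)
    return int(value)


def rollout_bounds(replicas, max_surge, max_unavailable):
    """Return (max total Pods, min available Pods) during a rolling update."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    return replicas + surge, replicas - unavailable


# replicas: 3, maxSurge: 1, maxUnavailable: 0 (the manifest in this section)
print(rollout_bounds(3, 1, 0))
# The Kubernetes defaults of 25% / 25% on a 10-replica Deployment
print(rollout_bounds(10, "25%", "25%"))
```

With maxUnavailable set to 0 the available count never drops below the desired replica count, so the update proceeds one extra Pod at a time.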

apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-nginx-deploy
spec:
  replicas: 3
  strategy:
    type: RollingUpdate   # rolling update (the default)
    rollingUpdate:
      maxSurge: 1         # create at most 1 extra Pod during the update
      maxUnavailable: 0   # allow 0 unavailable Pods during the update (zero downtime)
  selector:
    matchLabels:
      app: my-nginx
  template:
    metadata:
      labels:
        app: my-nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.24-alpine
        ports:
        - containerPort: 80

# Recreate strategy: delete all old Pods first, then create the new ones

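apiVersion: apps/v1
kind: Deployment
metadata:
  name: batch-job-deploy
spec:
  replicas: 3
  strategy:
    type: Recreate   # not suitable for services that need zero downtime
  selector:
    matchLabels:
      app: batch-job
  template:
    metadata:
      labels:
        app: batch-job   # must match spec.selector, otherwise the Deployment is rejected
    spec:
      containers:
      - name: batch-job
        image: my-batch-job:v2

# Check rollout status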

kubectl rollout status deployment/my-nginx-deploy

# Show rollout history

kubectl rollout history deployment/my-nginx-deploy

# Roll back to the previous revision

kubectl rollout undo deployment/my-nginx-deploy

# Roll back to a specific revision

kubectl rollout undo deployment/my-nginx-deploy --to-revision=2

# Show the details of a specific revision

kubectl rollout history deployment/my-nginx-deploy --revision=2

# Pause and resume a rollout

kubectl rollout pause deployment/my-nginx-deploy

# Change the configuration (no rollout is triggered while paused)

kubectl set image deployment/my-nginx-deploy nginx=nginx:1.25

kubectl rollout resume deployment/my-nginx-deploy # after resuming, all pending changes roll out together

Probe configuration (health checks)
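
The probe timings in the manifest that follows translate into concrete time budgets: roughly periodSeconds × failureThreshold of consecutive failures before Kubernetes acts. The sketch below computes those budgets from the values used in this section. It is an approximation under that assumption; the function name is hypothetical, and per-probe timeoutSeconds can stretch the real figures slightly.

```python
def failure_window(period_s, failure_threshold, initial_delay_s=0):
    """Seconds of consecutive probe failures tolerated before Kubernetes acts."""
    return initial_delay_s + period_s * failure_threshold


# Values taken from the probe-demo manifest in this section.
startup_budget = failure_window(period_s=5, failure_threshold=30)    # time allowed to start
liveness_window = failure_window(period_s=10, failure_threshold=3)   # before a restart
readiness_window = failure_window(period_s=5, failure_threshold=3)   # before leaving Endpoints

print(f"startup budget:   {startup_budget}s")
print(f"liveness window:  {liveness_window}s")
print(f"readiness window: {readiness_window}s")
```

A slow-starting application therefore gets up to 150 seconds to come up, after which a hung container is restarted about 30 seconds after it stops answering /health.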

apiVersion: apps/v1
kind: Deployment
metadata:
  name: probe-demo
spec:
  replicas: 3
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp
    spec:
      containers:
      - name: myapp
        image: myapp:1.0
        ports:
        - containerPort: 8080
        # Liveness probe
        # On failure the container is restarted
        livenessProbe:
          httpGet:
            path: /health
            port: 8080
          initialDelaySeconds: 30   # start checking 30 seconds after the container starts
          periodSeconds: 10         # check every 10 seconds
          timeoutSeconds: 5         # each check times out after 5 seconds
          failureThreshold: 3       # restart after 3 consecutive failures
          successThreshold: 1       # 1 success counts as healthy
        # Readiness probe
        # On failure the Pod is removed from the Service Endpoints
        readinessProbe:
          httpGet:
            path: /ready
            port: 8080
          initialDelaySeconds: 5
          periodSeconds: 5
          timeoutSeconds: 3
          failureThreshold: 3
        # Startup probe
        # For slow-starting applications; once it succeeds, the liveness probe takes over
        startupProbe:
          httpGet:
            path: /health
            port: 8080
          initialDelaySeconds: 0
          periodSeconds: 5
          failureThreshold: 30      # wait at most 150 seconds for startup (30 * 5)

# View probe status

kubectl describe pod probe-demo-xxxxx | grep -A 5 "Liveness\|Readiness\|Startup"

# View events

kubectl get events --field-selector involvedObject.name=probe-demo-xxxxx

Resource limits
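
The manifest that follows writes CPU in millicores (`250m`) and memory with binary suffixes (`256Mi`). The sketch below converts those quantity strings into cores and bytes to make the units concrete. It is a minimal parser covering only the suffixes listed in the table, not the full Kubernetes quantity grammar, and the function names are hypothetical.

```python
# Binary (Ki, Mi, Gi) and decimal (k, M, G) memory suffixes.
MEMORY_FACTORS = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,
    "k": 10**3, "M": 10**6, "G": 10**9,
}


def cpu_cores(quantity):
    """'250m' -> 0.25 cores, '2' -> 2.0 cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def memory_bytes(quantity):
    """'256Mi' -> 268435456 bytes; a bare number is already bytes."""
    for suffix, factor in MEMORY_FACTORS.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor
    return int(quantity)


print(cpu_cores("250m"), cpu_cores("500m"))
print(memory_bytes("256Mi"), memory_bytes("512Mi"))
print(memory_bytes("256M"))  # decimal megabytes, smaller than 256Mi
```

Note that `256Mi` is 256 MiB (268,435,456 bytes), slightly more than 256 MB, so the two suffixes are not interchangeable.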

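apiVersion: apps/v1
kind: Deployment
metadata:
  name: resource-demo
spec:
  replicas: 3
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp   # must match spec.selector, otherwise the Deployment is rejected
    spec:
      containers:
      - name: myapp
        image: myapp:1.0
        resources:
          requests:             # minimum reserved at scheduling time
            cpu: "250m"         # 0.25 core
            memory: "256Mi"     # 256 MiB
          limits:               # maximum the container may use
            cpu: "500m"         # 0.5 core
            memory: "512Mi"     # 512 MiB
          # CPU usage above the limit is throttled (the container is not killed)
          # Memory usage above the limit gets the container OOM-killed

# View Pod resource usage (requires metrics-server)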

kubectl top pod resource-demo-xxxxx

# View the Pod's configured requests and limits

kubectl describe pod resource-demo-xxxxx | grep -A 5 "Limits\|Requests"

Environment variables and configuration management
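
The Deployment that follows reads from a ConfigMap named app-config and a Secret named db-secret, neither of which is defined in this article. The sketch below generates both as a JSON manifest that `kubectl apply -f` accepts. The object names and keys match the Deployment; the values (`db.example.internal`, `change-me`) and the helper function names are placeholders invented for illustration. Secret `data` values must be base64-encoded, which is the one step the code automates.

```python
import base64
import json


def config_map(name, data):
    """Build a ConfigMap manifest; values are stored as plain strings."""
    return {"apiVersion": "v1", "kind": "ConfigMap",
            "metadata": {"name": name}, "data": data}


def secret(name, data):
    """Build an Opaque Secret manifest; values are base64-encoded."""
    encoded = {key: base64.b64encode(value.encode()).decode()
               for key, value in data.items()}
    return {"apiVersion": "v1", "kind": "Secret", "type": "Opaque",
            "metadata": {"name": name}, "data": encoded}


manifest = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [
        config_map("app-config", {"database.host": "db.example.internal"}),  # placeholder value
        secret("db-secret", {"password": "change-me"}),                      # placeholder value
    ],
}

print(json.dumps(manifest, indent=2))
```

Redirect the output to a file and apply it before the Deployment, otherwise the Pods stay in a config error state because the referenced objects do not exist. Base64 is an encoding, not encryption, so keep real passwords out of version control.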

apiVersion: apps/v1
kind: Deployment
metadata:
  name: config-demo
spec:
  replicas: 2
  selector:
    matchLabels:
      app: myapp
  template:
    metadata:
      labels:
        app: myapp   # must match spec.selector, otherwise the Deployment is rejected
    spec:
      containers:
      - name: myapp
        image: myapp:1.0
        env:
        - name: SPRING_PROFILES_ACTIVE
          value: "prod"
        - name: DB_HOST
          valueFrom:
            configMapKeyRef:
              name: app-config
              key: database.host
        - name: DB_PASSWORD
          valueFrom:
            secretKeyRef:
              name: db-secret
              key: password
        envFrom:
        - configMapRef:
            name: app-config
        volumeMounts:
        - name: config-volume
          mountPath: /etc/app/config
      volumes:
      - name: config-volume
        configMap:
          name: app-config

Key takeaways

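- The point of a deployment topic is not that the install succeeds, but that the service runs stably, can be debugged, and can be rolled back.

- For any one service, track at least its version, directories, ports, permissions, data, logs, and backups.

- Linux problems often span the system, network, service, and application layers.

- Kubernetes topics require looking at resource objects, scheduling behavior, network exposure, and configuration distribution together.

Applying it in a project

- Turn the installation steps into a repeatable checklist, and into scripts or configuration files where needed.

- Keep configuration, data, and log directories and mount points clearly separated.

- Before going live, check the firewall, SELinux, time zone, disks, system services, and health checks.

- Before going live, check images, namespaces, probes, resource limits, Service/Ingress, and configuration sources.

Common pitfalls

- Using latest or an unpinned version, which makes environments impossible to reproduce.

- Verifying only that the service starts, without testing persistence, start-on-boot, or failure recovery.

- Changing configuration first when something breaks, instead of reading the logs and tracing the dependency chain.

- Applying YAML without understanding how the objects depend on one another.

Next steps

- Fill in systemd, performance monitoring, security hardening, and backup and restore.

- Move single-host procedures to Docker, Kubernetes, or an IaC approach.

- Build a standard operations handbook covering inspections, scaling, rollback, and disaster-recovery drills.

- Fill in scheduling, network policy, storage, GitOps, and platform engineering skills.

When this applies

- When you are ready to bring "Kubernetes Dynamic Scaling and Failover" into a real project, start by validating the critical path in an isolated module or a minimal example.

- Suitable for single-host environment setup, quick middleware installs, test-environment validation, and preparation for production deployment.

- When service stability depends on ports, permissions, directories, networking, and system parameters, these topics directly decide success or failure.

Recommendations

- Pin version numbers and image tags to avoid the unpredictable changes that "latest" brings.

- Manage configuration, data, and log directories separately, and document the recovery steps.

- Before going live, confirm ports, firewall, SELinux, time zone, and disk space.

Troubleshooting checklist

- Check systemctl, container logs, and application logs first to establish which layer the failure is in.

- Check port conflicts, directory permissions, mount paths, and network connectivity.

- For a problem in a new environment, start by comparing it against a known-good environment.

Review questions

- If you brought "Kubernetes Dynamic Scaling and Failover" into your current project, which inputs, outputs, and failure paths would you verify first?

- At what scale and under which boundary conditions is "Kubernetes Dynamic Scaling and Failover" most likely to expose problems? Which metrics or logs would you use to confirm them?

- Compared with the default behavior or the alternatives, what are the biggest benefit and the biggest cost of adopting "Kubernetes Dynamic Scaling and Failover"?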